
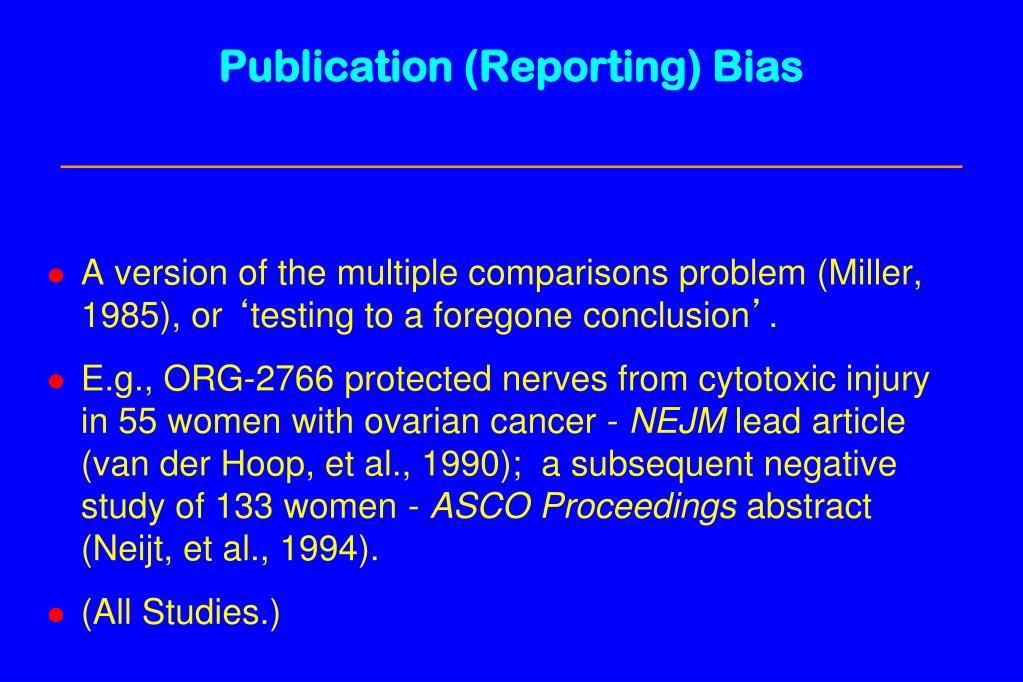

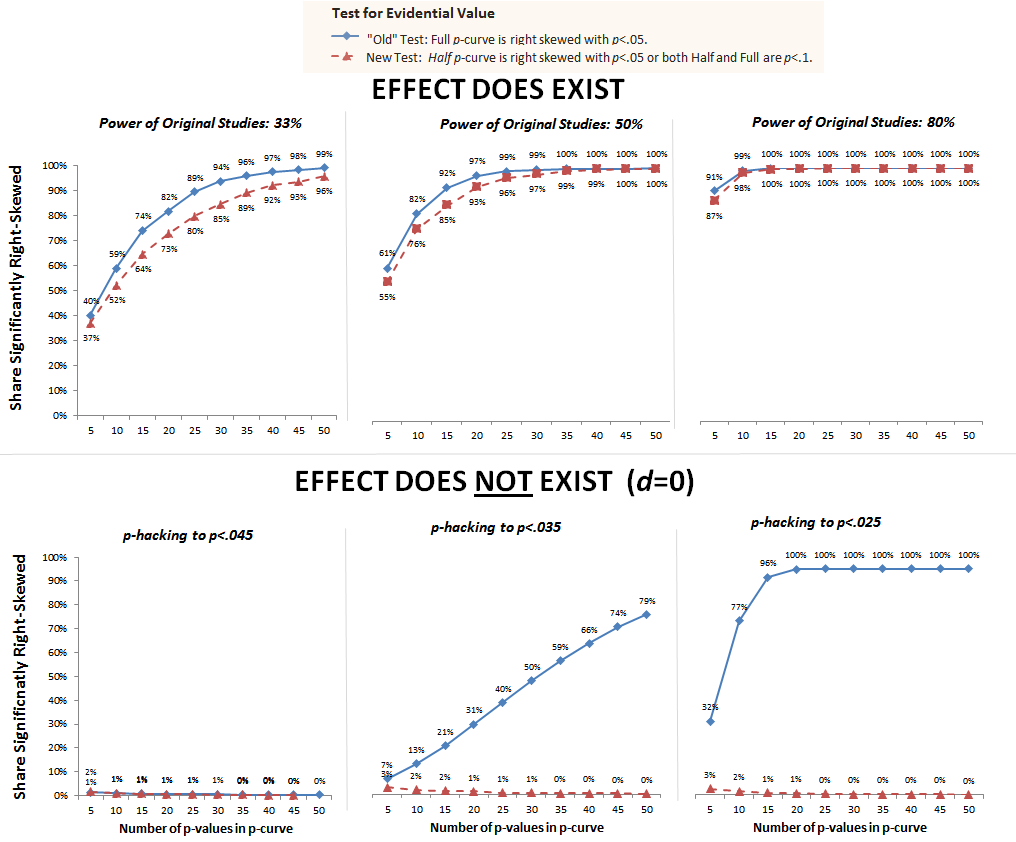

It is difficult to detect p-hacking, but being aware of it provides an extra line of defence against bad science.Let’s proceed with our parade of fraudulent data practices, shall we? Next up is data dredging (a/k/a “p-hacking”), a more sophisticated (and less transparent) form of cherry picking. A clear financial interest lying behind a study does not by itself invalidate the results, but it should be a reason for journalists to bring extra scrutiny to bear. It is also very important to look at the institution that carries out and funds the research, and to ask whether they are trustworthy.

Results which go against the majority of existing research on a topic should raise suspicion, as should extremely sensationalised findings. This is why it is so important to keep an eye out for red flags when seeing the results of a study being reported. With a plausible explanation for why the ‘hypothesis’ was proposed, results generated by torturing the data in this way are indistinguishable from genuine studies. P-hacking is particularly insidious because it can be so hard to detect. For this reason, p-hacking is considered to be highly unethical. Honest science demands that scientists go into their research with a clear, motivated hypothesis – for example, that we have reason to believe that this particular chemical causes this type of cancer, and we want to test this. In addition, you record the rate of going bald in the group over time.

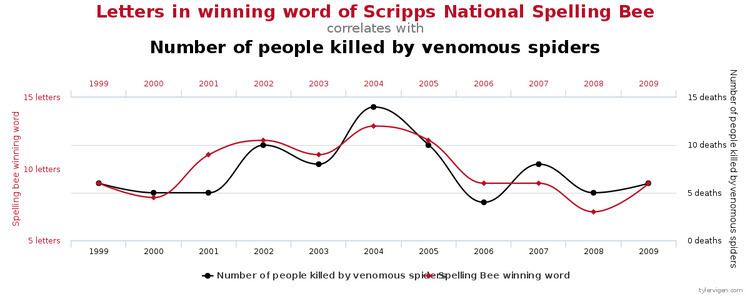

You could then get a group of 10,000 men (a pretty big sample size by all accounts) to report on their consumption of M&Ms, Twix and Mars Bars over a period of time. To take a toy example, suppose you wanted to establish a link between chocolate and baldness. In this case, they could use statistical testing to manufacture this result through selective reporting. Suppose they started off with the conclusion they want to reach, and were not particularly concerned with scientific ethics. Suppose, however, a scientist took the opposite approach. This is a baseline assumption of scientific testing: that the scientist forms a prior hypothesis based on the theory which they then put to the test. In the statistical significance post, the scientist went in with a well-motivated hypothesis which she put to the test in an experiment. It is one of the most common ways in which data analysis is misused to generate statistically significant results where none exists, and is one which everyone reporting on science should remain vigilant against. This piece introduces one such technique known as ‘p-hacking’.

To avoid reporting spurious results as fact and giving air to bad science, journalists must be able to recognise when such methods may be in use. However, there are ways in which statistical techniques can be misused and abused to show effects which are not really there. Most scientists are careful and scrupulous in how they collect data and carry out statistical tests. It is a misuse of data analysis to find patterns in data that can be presented as statistically significant when in fact there is no real underlying effect. This is a technique known colloquially as ‘p-hacking’. In this post, we will look at a way in which this process can be abused to create misleading results. In our explainer on statistical significance and statistical testing, we introduced how you go about testing a hypothesis, and what can be legitimately inferred from the results of a statistical test.
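To make the chocolate-and-baldness example concrete, here is a minimal Python sketch of how data dredging can manufacture a ‘link’. Everything in it is a hypothetical assumption for illustration, not data from any real study: each man reports weekly servings (0 to 7) of each chocolate, 20% of men go bald at random regardless of diet, and the p-hacker compares bald rates for every chocolate at every “eats at least k servings a week” cutoff using a two-proportion z-test. The names `simulate_null_study`, `dredge` and `chance_of_false_positive` are invented for this sketch. Because chocolate has no effect by construction, every ‘significant’ result `dredge` reports is a false positive.

```python
import math
import random

# Hypothetical study design (illustrative only, not from any real study).
CANDIES = ("M&Ms", "Twix", "Mars Bars")
MAX_SERVINGS = 7          # weekly servings reported, 0..7
CUTOFFS = range(1, 7)     # "high consumer" = at least k servings a week, k = 1..6


def two_proportion_p_value(x1, n1, x2, n2):
    """Two-sided p-value of a two-proportion z-test (normal approximation)."""
    if n1 == 0 or n2 == 0:
        return 1.0
    pooled = (x1 + x2) / (n1 + n2)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    if se == 0:
        return 1.0
    z = (x1 / n1 - x2 / n2) / se
    return math.erfc(abs(z) / math.sqrt(2))


def simulate_null_study(rng, n_men=10_000, bald_rate=0.2):
    """One study in which chocolate has NO effect on baldness, by construction."""
    men = []
    for _ in range(n_men):
        servings = {candy: rng.randint(0, MAX_SERVINGS) for candy in CANDIES}
        bald = rng.random() < bald_rate  # drawn independently of servings
        men.append((servings, bald))
    return men


def dredge(men, alpha=0.05):
    """Try every candy x cutoff split and keep only the 'significant' ones."""
    hits = []
    for candy in CANDIES:
        # Count men, and bald men, at each servings level for this candy.
        total = [0] * (MAX_SERVINGS + 1)
        bald_count = [0] * (MAX_SERVINGS + 1)
        for servings, bald in men:
            total[servings[candy]] += 1
            bald_count[servings[candy]] += int(bald)
        n_all, x_all = sum(total), sum(bald_count)
        for k in CUTOFFS:
            n_high, x_high = sum(total[k:]), sum(bald_count[k:])
            p = two_proportion_p_value(x_high, n_high, x_all - x_high, n_all - n_high)
            if p < alpha:
                hits.append((candy, k, p))
    return hits


def chance_of_false_positive(n_tests, alpha=0.05):
    """P(at least one p < alpha) over n independent tests of true null hypotheses."""
    return 1 - (1 - alpha) ** n_tests


if __name__ == "__main__":
    rng = random.Random(0)
    n_studies = 100
    fooled = sum(1 for _ in range(n_studies) if dredge(simulate_null_study(rng)))
    print(f"{fooled}/{n_studies} no-effect studies yielded a 'significant' chocolate link")
    print(f"Independent-test formula for 18 tests: {chance_of_false_positive(18):.2f}")
```

A single, pre-specified test at the 5% level should be fooled only about 5% of the time. Trying 3 chocolates × 6 cutoffs gives 18 shots at that threshold; if the tests were independent, the chance of at least one false positive would be about 0.60. The cutoffs for the same chocolate overlap, so those tests are correlated and the simulated share will not match that figure exactly, but it should typically sit well above 5%. Publishing only the winning comparison, with a plausible story attached, is exactly the selective reporting described above.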
